We often think of cognitive science as a flashlight, illuminating the hidden machinery of how we learn. But what if that flashlight is actually a molding tool? When we use “Representational Cognitive Science” to understand education, we rely heavily on proxies (test scores, reaction times, or digital engagement metrics) to tell us whether a student is “learning.”

The danger is that these measures might not just be tracking reality; they might be creating a new, artificial one.

The Map is Not the Territory

In the world of cognitive representations, we assume the mind works by processing internal symbols. To “see” this happening, researchers and educators use proxy measures. We can’t see a “memory trace” forming, so we measure how quickly a student can recall a list of words in a quiet lab.

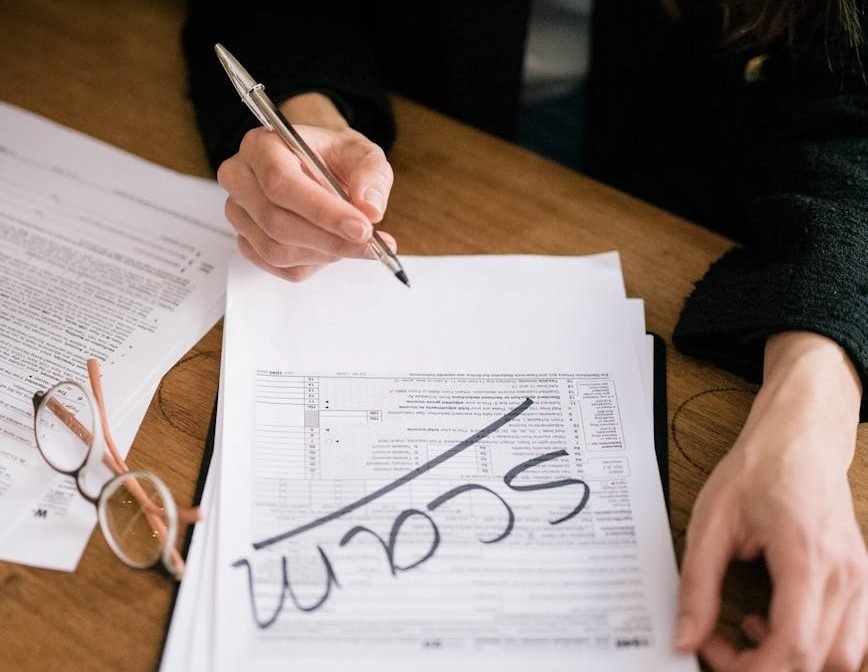

The problem? These measures often tell us very little about performance outside the setting that produced them. A student might become an expert at “the test,” manipulating the specific symbols the proxy looks for, without actually gaining the deep, messy, and contextual understanding needed to solve a problem in the wild.

The Looping Effect: When Students Become the Measure

This is where philosopher Ian Hacking enters the room with a warning. He described something called the “Looping Effect.” It’s a feedback loop between the scientist’s categories and the people those categories describe.

- The Definition: We define a “successful learner” based on a specific cognitive representation (e.g., “High Working Memory Capacity”).

- The Filter: We build classrooms and software that reward students who mimic the behaviors associated with that measure.

- The Adaptation: Students, being adaptable humans, begin to perceive themselves through these categories. They internalize the label. They change their study habits, their self-image, and even their cognitive approach to fit the “ideal” model.

- The Confirmation: Because the students have changed to fit the category, the original (and perhaps arbitrary) measure now looks like a “natural law” of science.

We didn’t discover a truth about the human mind; we pressured the mind into a specific shape.

Learning for the Proxy, Not the Life

In modern education, this is a massive risk. If we use cognitive models to create “personalized learning” algorithms, we might be narrowing the student’s potential. If an algorithm decides a student is a “visual learner” or has a “short attention span” based on proxy clicks, and then only feeds them content that fits that profile, the student has little chance to be anything else. Over time, the student becomes that profile.
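To make that feedback loop concrete, here is a minimal toy sketch in Python. It is not a model of any real personalization system. The function name `simulate_profile_lock_in`, the content categories, and the click counts are all hypothetical, and it makes the strong simplifying assumption that a student clicks on everything they are served.

```python
def simulate_profile_lock_in(initial_clicks, rounds=5, items_per_round=10):
    """Toy model of a proxy-driven personalization loop.

    Each round, the student is labeled by their most-clicked content
    type, and only that type is served. Assumption (hypothetical): the
    student clicks every item served, so new clicks can only confirm
    the existing label.
    """
    clicks = dict(initial_clicks)  # copy so the caller's data is untouched
    history = []
    for _ in range(rounds):
        # The "profile" is simply whichever category has the most clicks.
        label = max(clicks, key=clicks.get)
        # Only content matching the label is served and clicked.
        clicks[label] += items_per_round
        share = clicks[label] / sum(clicks.values())
        history.append((label, share))
    return history


if __name__ == "__main__":
    # A student with nearly even interests: 4 visual, 3 text, 3 audio clicks.
    start = {"visual": 4, "text": 3, "audio": 3}
    for round_number, (label, share) in enumerate(simulate_profile_lock_in(start), 1):
        print(f"round {round_number}: labeled '{label}', share of clicks = {share:.0%}")
```

Under these assumptions, a student who starts with an almost even 4/3/3 split is labeled “visual” in every round, and the visual share of their clicks climbs from 70% after the first round to 90% after the fifth. The data ends up looking like strong evidence for the label, even though the label itself produced the data.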

We are essentially training students to be better at being “data points.” We optimize for the proxy, but the actual human capability (the ability to think critically across domains or handle ambiguity) gets left behind because it’s too hard to represent in a clean cognitive model.

The Big Picture

We have to ask ourselves: are we using cognitive science to help students grow, or are we using it to build a more efficient “loop” that turns them into predictable subjects?

If we focus too much on the representation and not the reality, we risk graduating a generation of people who are statistically perfect on paper but lack the rugged, unquantifiable skills that real life demands.